For years, the AI ecosystem has been paralyzed by the O(N^2) integration problem: every new model required bespoke glue code for every distinct data source. The Model Context Protocol (MCP) shatters this bottleneck, introducing a universal standard that is redefining enterprise agentic AI.

1. The O(N^2) Integration Nightmare

Before MCP, building an AI agent meant wrestling with fragmented, brittle frameworks. If you wanted Claude, GPT-4, and a local Llama model to access your PostgreSQL database, Jira API, and local filesystem, you had to write and maintain nine separate integrations (three models times three data sources), typically using wrappers like LangChain or LlamaIndex.
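The arithmetic behind that count can be sketched in a few lines. The model and data-source names come from the example above; the function names are illustrative only, and the "shared protocol" count assumes one client adapter per model and one server per data source.

```python
from itertools import product

models = ["Claude", "GPT-4", "Llama"]
sources = ["PostgreSQL", "Jira", "filesystem"]


def bespoke_integrations(models, sources):
    """One custom connector for every (model, source) pair."""
    return list(product(models, sources))


def shared_protocol_components(models, sources):
    """One client adapter per model plus one server per source."""
    return [f"{m} client" for m in models] + [f"{s} server" for s in sources]


print(len(bespoke_integrations(models, sources)))        # 9
print(len(shared_protocol_components(models, sources)))  # 6
```

Adding a fourth model grows the first count by three connectors and the second by one adapter, which is the scaling difference the rest of the article relies on.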

This N-to-N connectivity problem meant engineering teams could spend as much as 80% of their time maintaining API glue and prompt templates, and only 20% actually building intelligent behaviors. The ecosystem desperately needed a "USB-C" port for AI context.

2. Enter the Model Context Protocol

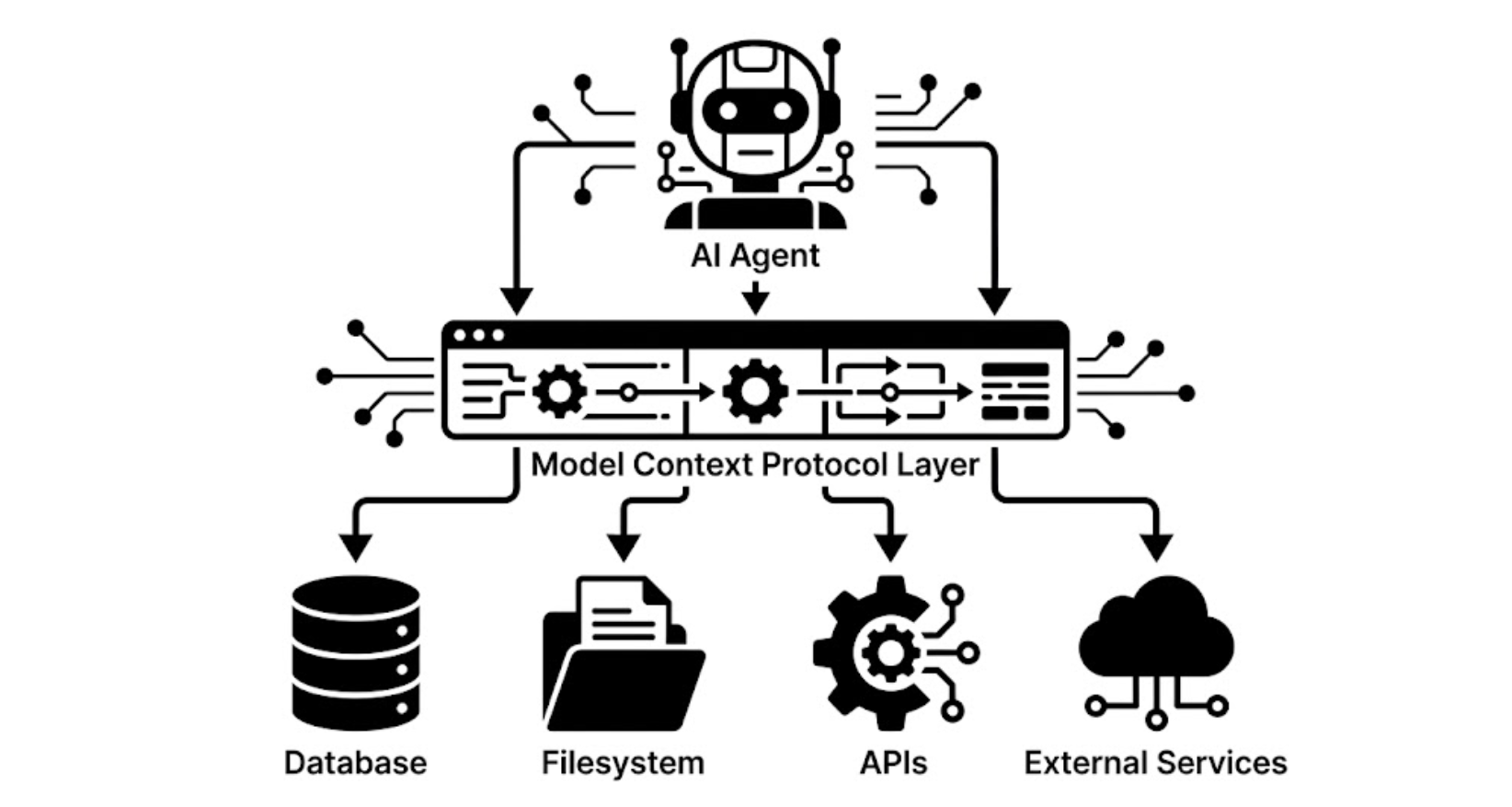

Open-sourced by Anthropic, the Model Context Protocol (MCP) is a standardized, open protocol that defines how AI applications access external data and tools. Instead of building a custom connector for every model, developers build a single, lightweight MCP Server for each data source or tool.

This server translates your proprietary data and backend functions into a standardized JSON-RPC 2.0 format. Any MCP-compliant Client (like Claude Desktop, Cursor, or your custom orchestrator) can immediately connect to this server over standard transport layers (like local stdio or remote SSE/HTTP) and seamlessly discover its capabilities.
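To make the discovery step concrete, here is a minimal sketch of a `tools/list` exchange in JSON-RPC 2.0, using only the Python standard library. The tool catalogue and `handle_message` are hypothetical stand-ins, not the official SDK; a real session would also begin with an `initialize` handshake and run over stdio or HTTP rather than a direct function call.

```python
import json

# Hypothetical catalogue; a real server would build this from its registered tools.
TOOLS = [
    {
        "name": "execute_sql_query",
        "description": "Run a SQL query (illustrative description).",
        "inputSchema": {
            "type": "object",
            "properties": {"query": {"type": "string"}},
            "required": ["query"],
        },
    }
]


def handle_message(raw: str) -> str:
    """Dispatch one JSON-RPC 2.0 request and return the serialized response."""
    request = json.loads(raw)
    if request.get("method") == "tools/list":
        body = {"result": {"tools": TOOLS}}
    else:
        # -32601 is the JSON-RPC 2.0 "Method not found" error code.
        body = {"error": {"code": -32601, "message": "Method not found"}}
    return json.dumps({"jsonrpc": "2.0", "id": request.get("id"), **body})


request = json.dumps({"jsonrpc": "2.0", "id": 1, "method": "tools/list"})
response = json.loads(handle_message(request))
print([tool["name"] for tool in response["result"]["tools"]])
```

The client learns each tool's name and argument schema from the response, so nothing about the tool has to be hardcoded on the client side.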

3. Legacy Architecture vs. MCP Standard

| Architectural Feature | Legacy (LangChain/Custom Glue) | Model Context Protocol (MCP) |
|---|---|---|
| Integration Scaling | O(N^2) - Every model needs a custom connector to every tool. | O(N) - Write one MCP server per tool; any compliant client can use it. |
| Authentication | Hardcoded into the agent's environment or prompt. Highly insecure. | Handled on the server side. The model never sees credentials. |
| Protocol | Proprietary Python/TS library calls. | Language-agnostic JSON-RPC 2.0. |
| Tool Discovery | Manual prompt engineering and hardcoded tool descriptions. | Dynamic capability negotiation at runtime. |

4. The Three Pillars of MCP

An MCP Server does not simply grant raw, unmanaged access to a database. It structures the AI's interaction into three strictly defined primitives, enabling an unprecedented level of programmatic control:

- Resources (Read-Only Context): Expose data that the LLM can read via standardized URIs (e.g., `file:///logs/error.log` or `postgres://schema/tables`). Resources can be static files, live database snapshots, or even subscriptions to real-time events.
- Tools (Executable Actions): Expose specific functions the LLM can call to mutate state or fetch dynamic data. Tools declare a JSON Schema for their arguments (e.g., `execute_sql_query(query: string)` or `create_github_issue(title, body)`).
- Prompts (Standardized Templates): Reusable, dynamic prompt templates managed strictly on the server side. This allows enterprise engineering teams to enforce standard operating procedures (SOPs) and context without bloating the client-side UI.
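The three primitives can be sketched as three small registries. `ToyServer` below is a hypothetical stand-in written for illustration, not the API of any MCP SDK, and its argument check covers only required string fields rather than full JSON Schema validation.

```python
from dataclasses import dataclass, field
from typing import Any, Callable, Dict


@dataclass
class ToyServer:
    """Illustrative stand-in for an MCP server; not an SDK class."""
    resources: Dict[str, Callable[[], str]] = field(default_factory=dict)
    tools: Dict[str, Dict[str, Any]] = field(default_factory=dict)
    prompts: Dict[str, str] = field(default_factory=dict)

    def read_resource(self, uri: str) -> str:
        """Resources: read-only data addressed by URI."""
        return self.resources[uri]()

    def call_tool(self, name: str, arguments: Dict[str, Any]) -> Any:
        """Tools: validated, executable actions."""
        tool = self.tools[name]
        # Simplified check: required string arguments only, not full JSON Schema.
        for key in tool["required"]:
            if not isinstance(arguments.get(key), str):
                raise ValueError(f"argument {key!r} must be a string")
        return tool["handler"](**arguments)

    def get_prompt(self, name: str, **values: str) -> str:
        """Prompts: server-managed templates filled in on request."""
        return self.prompts[name].format(**values)


server = ToyServer()
server.resources["file:///logs/error.log"] = lambda: "2 errors in the last hour"
server.tools["create_github_issue"] = {
    "required": ["title", "body"],
    "handler": lambda title, body: {"created": title},
}
server.prompts["triage"] = "Summarize this log and propose next steps:\n{log}"

log = server.read_resource("file:///logs/error.log")
print(server.get_prompt("triage", log=log))
print(server.call_tool("create_github_issue", {"title": "Log errors", "body": log}))
```

Keeping the three registries separate mirrors the split described above: reads cannot mutate state, actions are checked before they run, and prompt wording stays under the server owner's control.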

5. Advanced Paradigms: Roots and Sampling

As the protocol has matured, it has expanded to include complex bidirectional communication, allowing the server and client to negotiate boundaries and tasks dynamically.

Roots (Client-Side Boundaries)

An MCP server might need to know which local directories it is allowed to access. The Client can expose "Roots" (explicit URIs defining the boundary of the server's sandbox), so that a server honoring them keeps the AI from reading files outside the user's active workspace.
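A server that honors roots needs some form of boundary check before touching a file. The sketch below is one possible approach, assuming POSIX-style `file://` URIs; `uri_to_path` and `is_within_roots` are hypothetical helper names. It normalizes paths lexically and does not resolve symlinks, which a production check would also need to handle.

```python
import posixpath
from typing import Iterable
from urllib.parse import unquote, urlparse


def uri_to_path(uri: str) -> str:
    """Convert a file:// URI to a normalized POSIX path (collapses '..' segments)."""
    parsed = urlparse(uri)
    if parsed.scheme != "file":
        raise ValueError(f"unsupported scheme: {parsed.scheme!r}")
    return posixpath.normpath(unquote(parsed.path))


def is_within_roots(target_uri: str, root_uris: Iterable[str]) -> bool:
    """Return True if the target lies inside at least one declared root."""
    target = uri_to_path(target_uri)
    for root_uri in root_uris:
        root = uri_to_path(root_uri)
        # Compare on a path-segment boundary so /project does not match /project-secrets.
        if target == root or target.startswith(root.rstrip("/") + "/"):
            return True
    return False


roots = ["file:///home/user/project"]
print(is_within_roots("file:///home/user/project/src/main.py", roots))   # True
print(is_within_roots("file:///home/user/project/../.ssh/id_rsa", roots))  # False
```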

Sampling (Server-to-LLM Requests)

In highly advanced setups, an MCP Server can pause its own execution to request an LLM completion from the Host application. This allows the server to intelligently summarize a massive dataset locally before sending a highly compressed payload back to the primary agent.
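As a rough sketch of that flow, the server builds a `sampling/createMessage` request and hands it to the client, which decides whether and how to run it. The helper names and `fake_host` are hypothetical; `fake_host` stands in for a real client that would forward the request to its LLM, typically after user approval.

```python
from typing import Callable, Dict, List


def build_sampling_request(request_id: int, text: str, max_tokens: int) -> Dict:
    """Server side: ask the client to run one completion on the server's behalf."""
    return {
        "jsonrpc": "2.0",
        "id": request_id,
        "method": "sampling/createMessage",
        "params": {
            "messages": [{"role": "user", "content": {"type": "text", "text": text}}],
            "maxTokens": max_tokens,
        },
    }


def summarize_rows(rows: List[str], ask_host: Callable[[Dict], str]) -> str:
    """Compress a large dataset on the server before returning it to the agent."""
    prompt = "Summarize these rows in one sentence:\n" + "\n".join(rows)
    return ask_host(build_sampling_request(1, prompt, max_tokens=100))


def fake_host(request: Dict) -> str:
    """Stand-in for the host application; returns a canned summary."""
    line_count = request["params"]["messages"][0]["content"]["text"].count("\n")
    return f"summary of {line_count} rows"


print(summarize_rows(["row 1", "row 2", "row 3"], fake_host))  # summary of 3 rows
```

Only the short summary travels back to the primary agent; the raw rows never leave the server.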

Code Execution Sandbox

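Instead of passing massive datasets over JSON-RPC, some MCP deployments provide a secure sandbox where the LLM can write and execute Python/JS code to analyze data locally, which can reduce token costs by as much as 90%. This is an implementation pattern built on top of MCP rather than a protocol primitive like Roots or Sampling.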

6. Why Enterprise InfoSec Mandates MCP

Perhaps the greatest triumph of MCP is its security posture. Because of its decoupled nature, it goes a long way toward containing the "rogue agent" problem.

Progressive Disclosure

Intermediate data processing happens on the server. PII (like real email addresses from a database) can be passed to an internal anonymization tool without ever entering the LLM's context window or leaving your VPC.
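One way to picture this is a server-side step that swaps real email addresses for placeholders before any text is returned to the model. The sketch below is illustrative only: `pseudonymize_emails` is a hypothetical helper, and the regular expression is a deliberately simple email matcher, not a complete PII detector.

```python
import re
from typing import Dict, Tuple

# Simplified email pattern, for illustration only.
EMAIL_PATTERN = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")


def pseudonymize_emails(text: str) -> Tuple[str, Dict[str, str]]:
    """Replace each distinct email with a stable placeholder.

    Returns the redacted text plus a placeholder-to-email mapping
    that is meant to stay on the server.
    """
    placeholders: Dict[str, str] = {}  # email -> placeholder
    mapping: Dict[str, str] = {}       # placeholder -> email

    def replace(match: "re.Match[str]") -> str:
        email = match.group(0)
        if email not in placeholders:
            token = f"<EMAIL_{len(placeholders) + 1}>"
            placeholders[email] = token
            mapping[token] = email
        return placeholders[email]

    return EMAIL_PATTERN.sub(replace, text), mapping


row = "alice@example.com opened a ticket; cc bob@example.com and alice@example.com"
redacted, mapping = pseudonymize_emails(row)
print(redacted)  # <EMAIL_1> opened a ticket; cc <EMAIL_2> and <EMAIL_1>
```

The model reasons over the placeholders, and the server can map them back to real addresses when a downstream tool actually needs them.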

Human-in-the-Loop Governance

Because MCP uses explicit tool-calling schemas, the client UI can automatically intercept requests. Security teams can mandate that any tool flagged as "destructive" (e.g., DROP TABLE, SEND_EMAIL) pauses the agent and requires an explicit human click to proceed.
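A client-side approval gate along these lines might look like the sketch below. It assumes tools carry the `destructiveHint` annotation defined in recent revisions of the MCP specification, where annotations are advisory hints; `gated_call`, the tool definitions, and the callbacks are hypothetical. Treating unannotated tools as destructive is a fail-closed choice made for this example.

```python
from typing import Any, Callable, Dict


def gated_call(
    tool: Dict[str, Any],
    arguments: Dict[str, Any],
    execute: Callable[[str, Dict[str, Any]], Any],
    approve: Callable[[str, Dict[str, Any]], bool],
) -> Any:
    """Client-side gate: destructive tools run only after explicit approval."""
    # Fail closed: a tool without the hint is treated as destructive.
    destructive = tool.get("annotations", {}).get("destructiveHint", True)
    if destructive and not approve(tool["name"], arguments):
        raise PermissionError(f"{tool['name']} was not approved")
    return execute(tool["name"], arguments)


send_email = {"name": "send_email", "annotations": {"destructiveHint": True}}
list_issues = {"name": "list_issues", "annotations": {"destructiveHint": False}}


def run(name: str, arguments: Dict[str, Any]) -> str:
    """Stand-in for forwarding a tools/call request to the server."""
    return f"ran {name}"


def deny(name: str, arguments: Dict[str, Any]) -> bool:
    """Stand-in for a user who clicks 'Reject' in the client UI."""
    return False


print(gated_call(list_issues, {}, run, deny))  # ran list_issues
```

In a real client, `approve` would render the tool name and arguments in the UI and block until the user responds.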